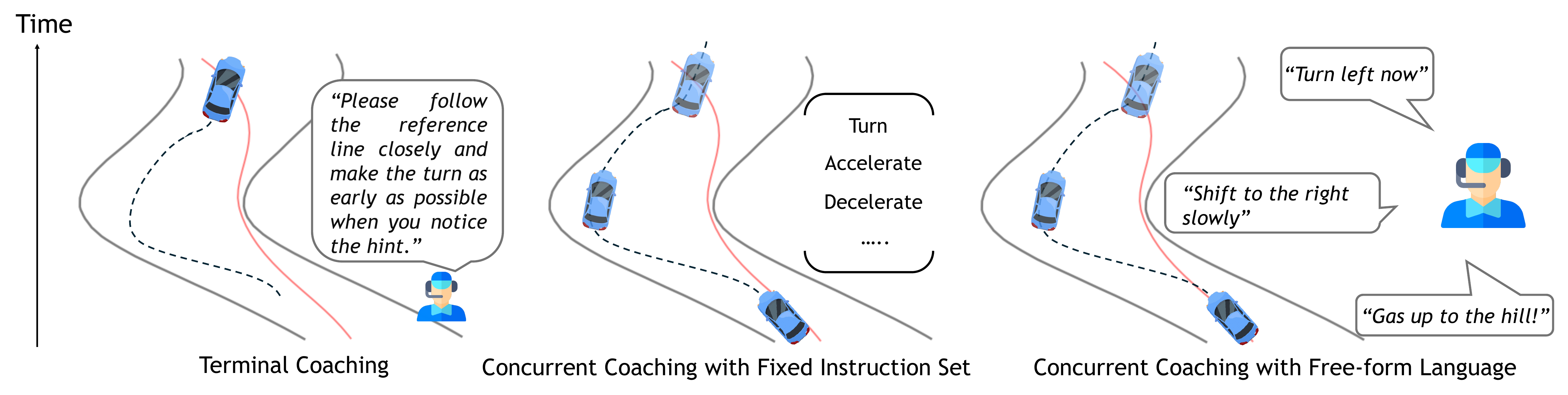

Real-time, language-based coaching of human physical skills has the potential to accelerate skill acquisition in fast-paced domains such as driving, sports, and surgery. Building an effective AI coach is difficult because it must decide both when to intervene and what to say. Traditional methods rely on fixed rules and instruction sets, while large language models can generate more flexible feedback but struggle with real-time responsiveness and domain-specific supervision.

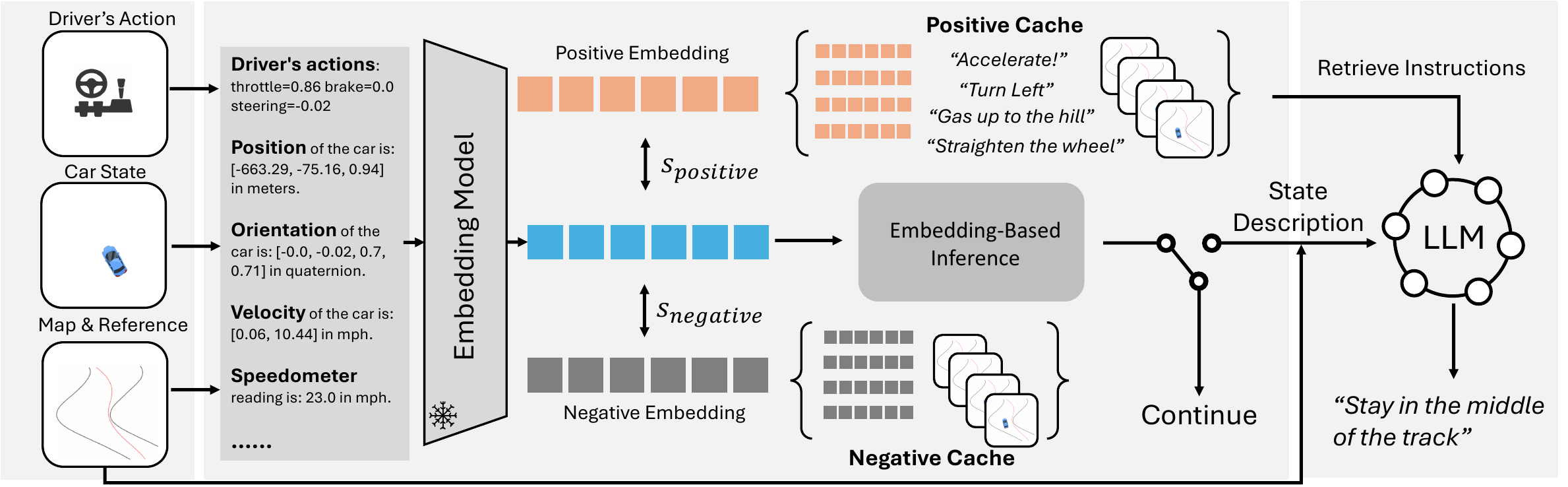

StreamCoach decomposes coaching into two stages: a fast stage that detects intervention opportunities by comparing the learner's current state embedding to past coaching moments, and a slower retrieval-augmented generation stage that produces context-aware instructions from relevant prior examples. In a high-performance driving domain, StreamCoach improves both feedback timing and instruction quality, suggesting a scalable framework for concurrent coaching with language.

The same semantic representation supports both rapid timing decisions and grounded feedback generation.
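The two-stage idea can be illustrated with a minimal sketch. It is not the authors' implementation. The cosine-similarity trigger, the `0.9` threshold, the top-`k` prompt format, the toy three-dimensional embeddings, and every name (`CoachingMoment`, `rank_moments`, `should_intervene`, `build_prompt`) are assumptions made for illustration. The sketch shows one similarity ranking over stored coaching moments serving both the fast timing decision and the retrieval of examples for the slower generation stage.

```python
import math
from dataclasses import dataclass


@dataclass
class CoachingMoment:
    embedding: list[float]  # state embedding when an expert intervened (toy values)
    instruction: str        # what the expert said at that moment


def cosine(a, b):
    """Cosine similarity between two equal-length vectors; 0.0 if either is zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_moments(state, memory):
    """Score every stored moment against the current state, most similar first."""
    scored = [(cosine(state, m.embedding), m) for m in memory]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


def should_intervene(ranked, threshold=0.9):
    """Fast stage (assumed rule): trigger when the closest past moment is similar enough."""
    return bool(ranked) and ranked[0][0] >= threshold


def build_prompt(ranked, k=2):
    """Slow stage input: the top-k retrieved instructions, formatted as LLM context."""
    examples = [m.instruction for _, m in ranked[:k]]
    return "Past coaching in similar states:\n" + "\n".join(f"- {e}" for e in examples)


# Hypothetical memory of past coaching moments.
memory = [
    CoachingMoment([1.0, 0.0, 0.0], "Brake earlier before the turn."),
    CoachingMoment([0.9, 0.1, 0.0], "Ease off the throttle at corner entry."),
    CoachingMoment([0.0, 0.0, 1.0], "Unwind the steering on exit."),
]

state = [0.95, 0.05, 0.0]  # current learner state embedding (toy value)
ranked = rank_moments(state, memory)
if should_intervene(ranked):
    prompt = build_prompt(ranked)  # would be passed to a language model
```

Because the ranking is computed once and reused, the timing check adds only a comparison against the threshold, while the costlier generation step runs only when the trigger fires.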

StreamCoach was evaluated on a simulated high-performance driving dataset with expert coaching annotations.

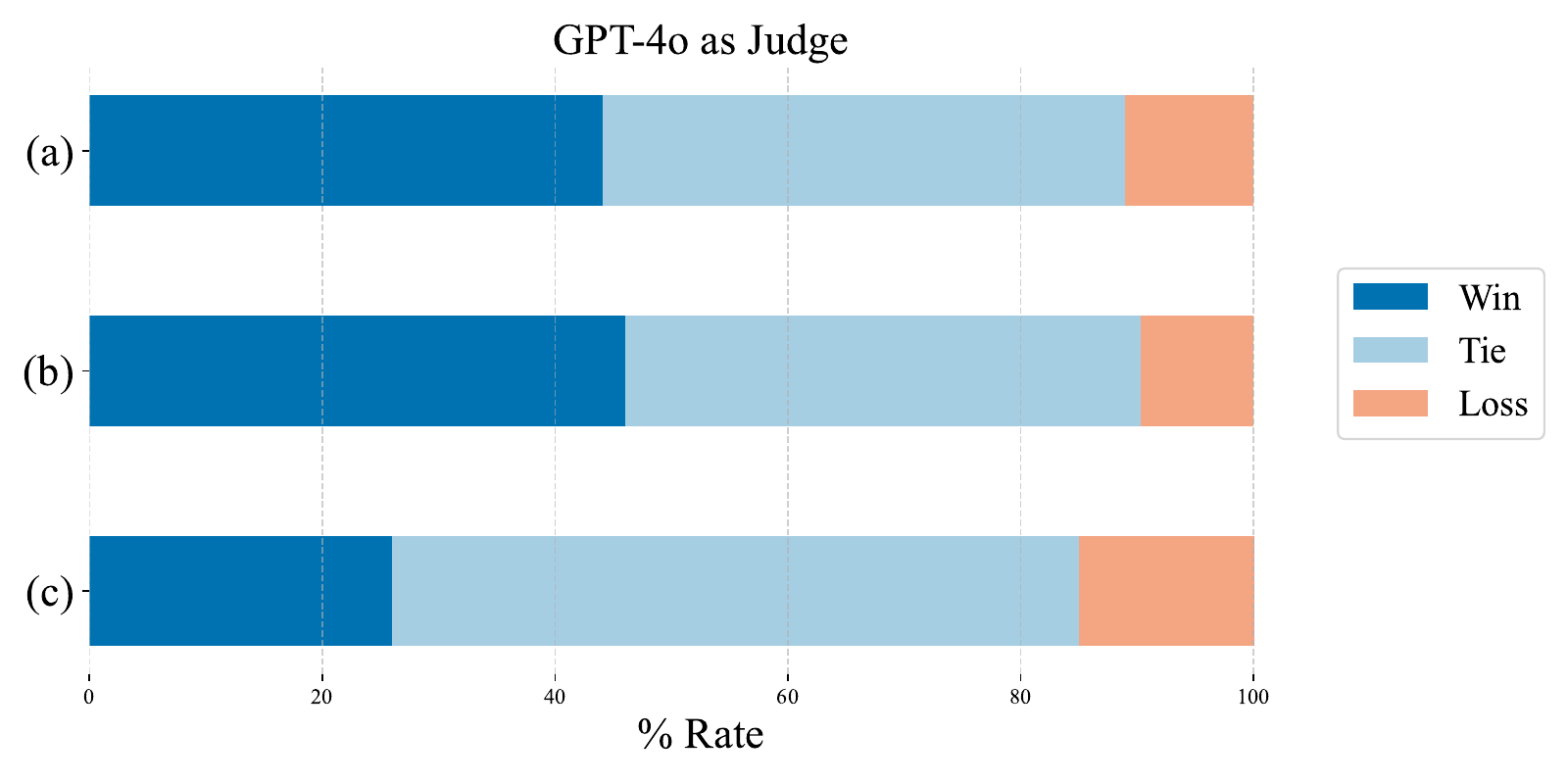

Instruction quality judged against baselines.

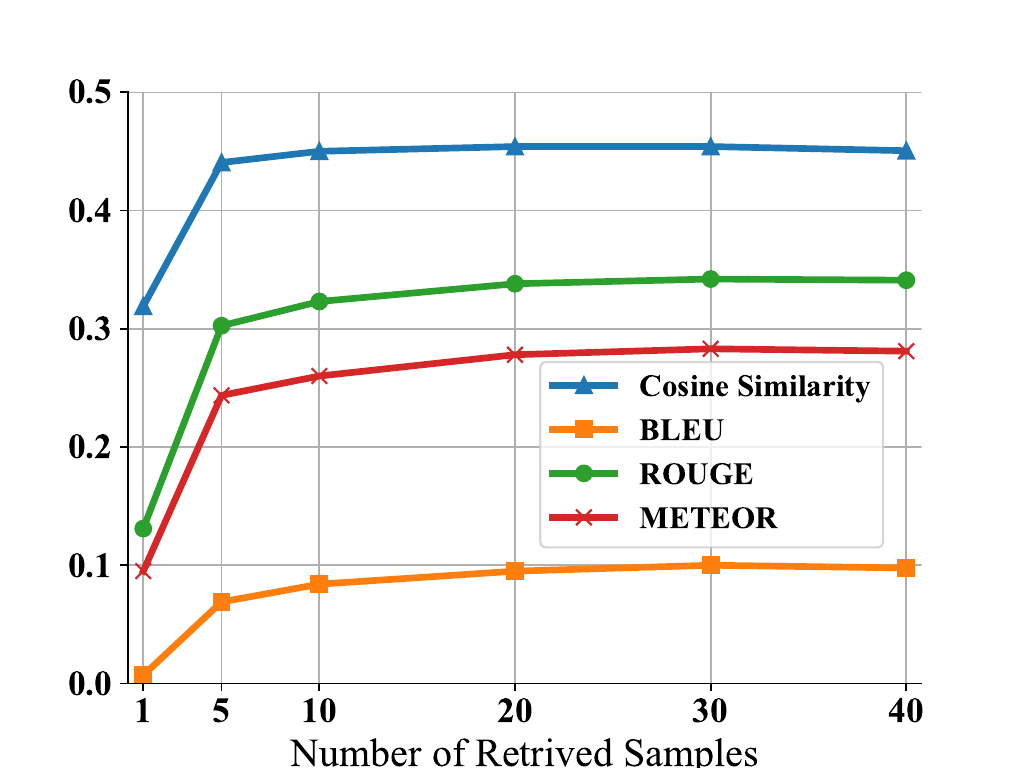

Ablation on retrieval context size.

@article{yu2025streamcoach,
  title = {Real-Time Coaching of Human Physical Skills with Large Language Models},
  author = {Yu, Cunjun and Gopinath, Deepak Edakkattil and Silva, Andrew and Tambwekar, Pradyumna and Sumner, Emily and Dees, Laporsha and Rosman, Guy and Leonard, John J. and Balachandran, Avinash and Hsu, David},
  year = {2025}
}