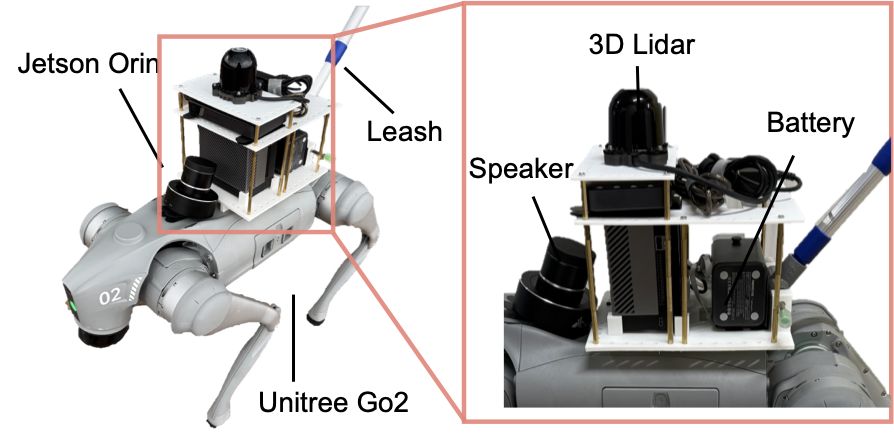

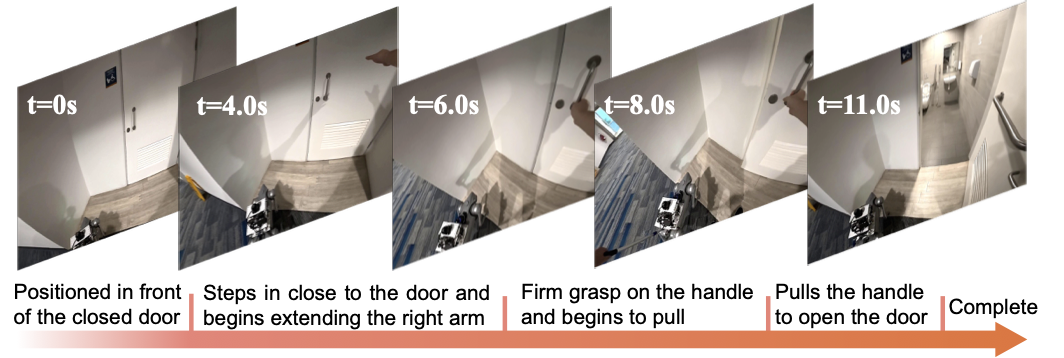

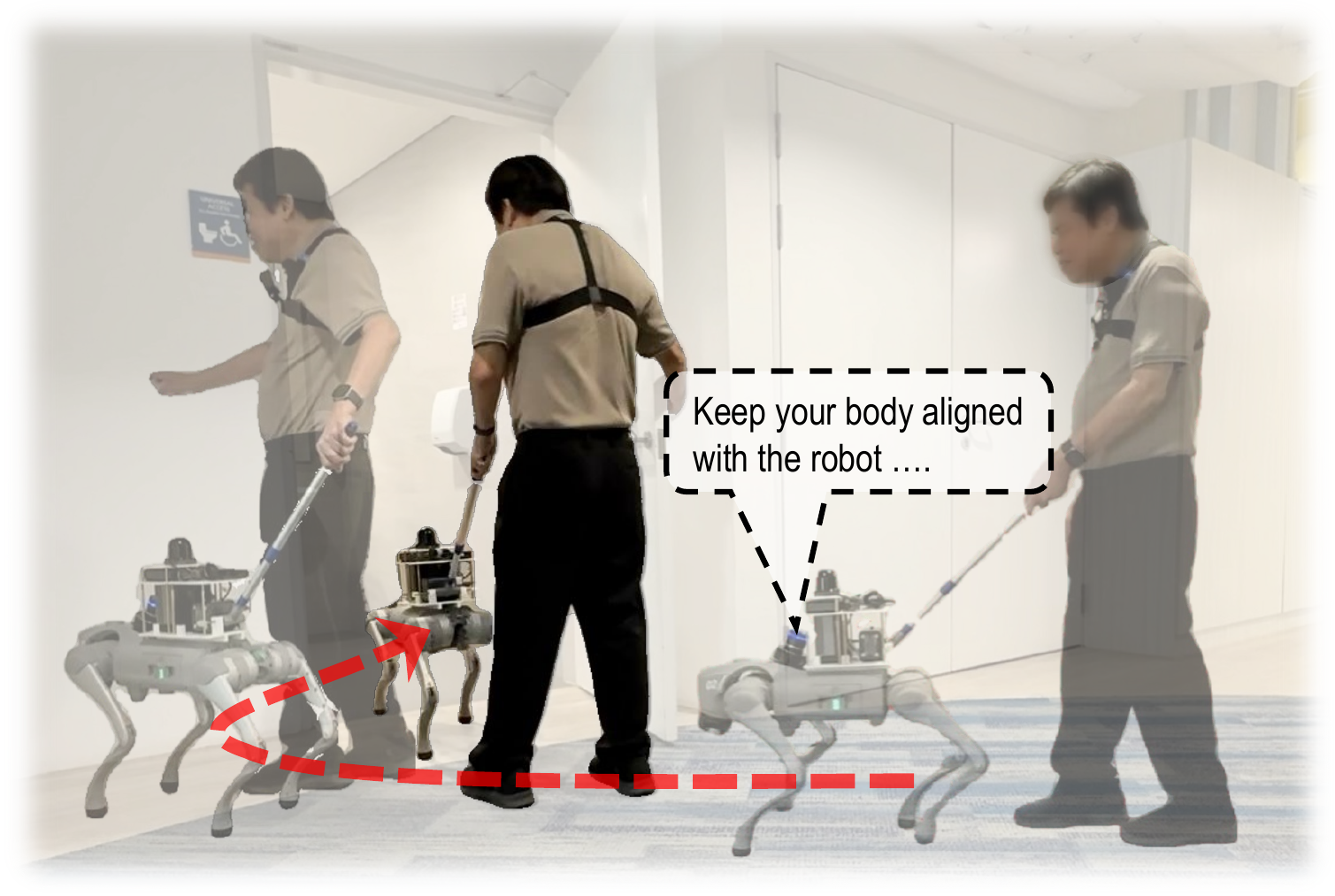

The platform uses a Unitree Go2 quadruped robot with onboard computation, LiDAR-based navigation, a rigid leash interface, a chest-mounted action camera for first-person observation, a microphone for capturing user feedback, and a speaker for playing generated instructions.

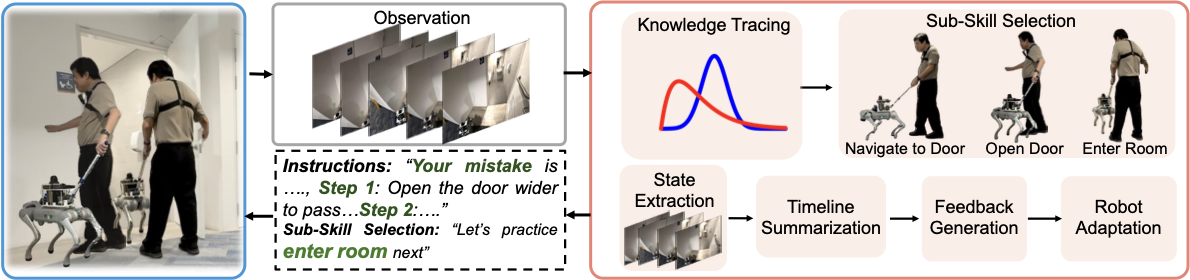

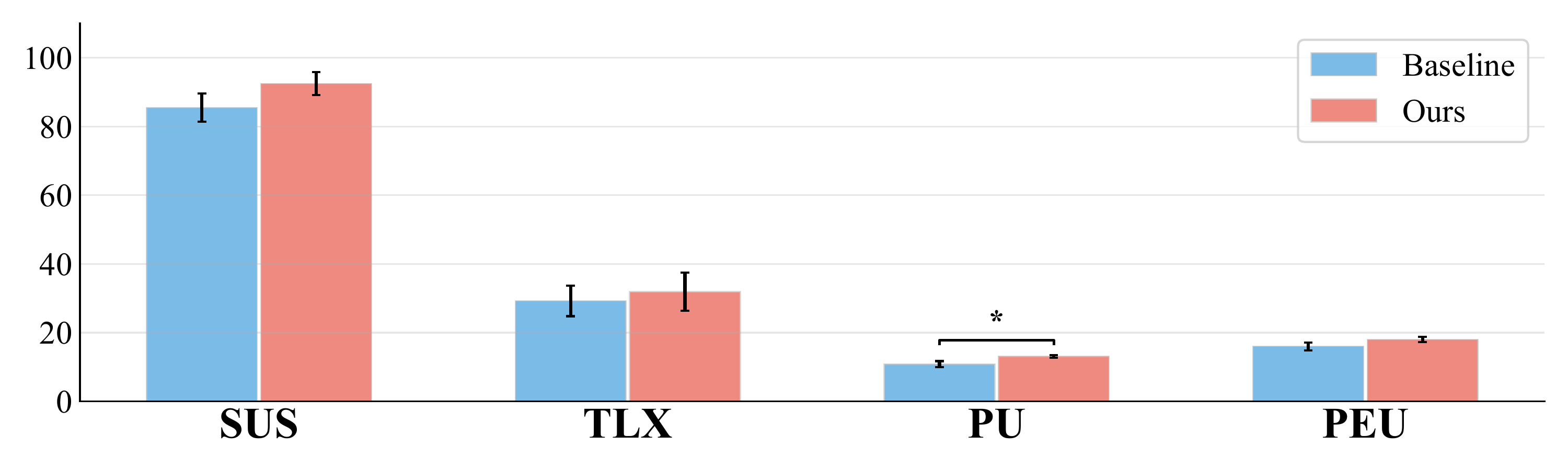

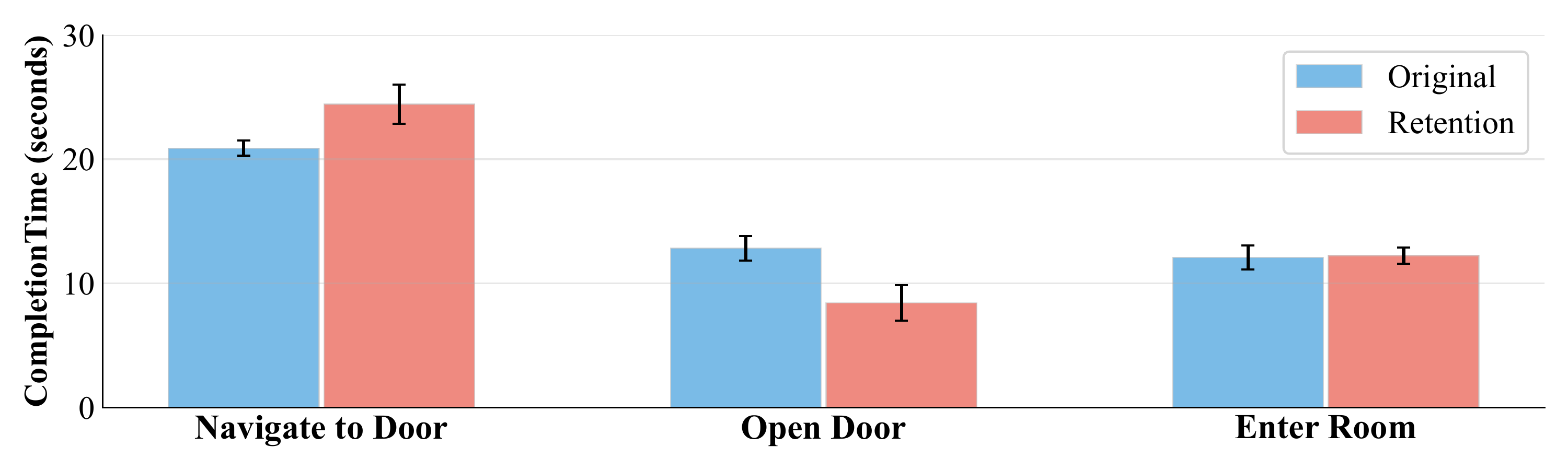

The navigation task focuses on doorway interaction, in which users must coordinate their position, timing, door handling, and following of the robot, all without visual feedback.